Artificial intelligence is rapidly transforming cybersecurity, helping organizations detect threats faster, automate responses, and strengthen their defenses like never before. But while AI brings clear advantages, it’s not without its downsides.

As businesses increasingly rely on AI-driven security systems, they also introduce new risks, some of which are often overlooked. From biased decision-making and false positives to adversarial attacks and overdependence on automation, AI can create vulnerabilities just as easily as it can prevent them.

Understanding these disadvantages is crucial. Without a clear view of the risks, organizations may unknowingly expose themselves to more sophisticated threats. This guide will show you the actual risks of AI in cybersecurity, where these risks come from, and how to handle them.

What is generative AI in cybersecurity?

Before getting into the downsides of AI, it helps to understand what we’re actually talking about when people say “AI in cybersecurity,” because not all AI is the same. People tend to lump everything together, but generative AI deserves its own spotlight.

Generative AI in cybersecurity refers to AI models that can create new content, code, phishing emails (fake emails), deepfakes (both audio and visuals), or even entire malware frameworks.

Using large language models, this AI automates security tasks like simulating attacks, creating synthetic training data, crafting plans for incident response, and even predicting how new threats evolve.

Unlike traditional AI that only spot threats by following laid down rules or looking for known patterns, generative AI creates new things based on learned data patterns. Now that’s both an advantage and a disadvantage. Why? Because defenders aren’t the only ones using it; criminals do too.

For defense, generative AI helps security teams to stay ahead of threats by modeling potential threats before they happen. On the offensive side? In the wrong hands, AI gets pretty dangerous.

Hackers use it to craft convincing phishing emails, make fake IDs to trick hiring systems, build malware that keeps changing shape, and spread disinformation. And it often works on a scale and speed that defenders struggle to keep up with.

One of the US Government Accountability Office’s reports on federal agency security incidents revealed something. It stated that improper usage by authorized users and phishing accounted for 38 percent and 19 percent of incidents reported. Both are areas where AI is now playing a deep and increasingly disruptive role.

The disadvantages of AI in cybersecurity you need to be aware of

Let’s take a closer look at some of the most important AI cybersecurity risks organizations face today. Some are technical. Some are organizational. All deserve serious attention, especially now that almost every sector is adopting AI.

1. False positives and false negatives are basically the most persistent challenges of AI security tools

AI-powered tools are supposed to sniff out anomalies, but half the time, the perceived threat is just harmless noise, like someone forgot their password, or Jim in accounting is up late organizing spreadsheets.

Sometimes AI systems send false positive alerts so much that security teams can’t tell the difference between a real threat and digital static. One of Torq’s research says 62% of security teams feel burned out due to alert overload, making it really hard to sift through the noise from real danger.

And then you’ve got the opposite problem: false negatives. That’s when legit threats slip right past, unnoticed, because the AI wasn’t looking in the right place, or its training data is stuck five cyberattacks in the past.

Analysts start to tune out after dealing with a million false warnings or irrelevant alerts. Trust in the system begins to erode, and then genuine attacks can slip through because no one is looking to catch them in time.

This isn’t theoretical; it’s a pattern security teams see often. If the system cries wolf too often, people will eventually stop believing it. And hackers love this because they’re banking on nobody paying attention when the alarms are going off.

What does this mean? For AI alert systems, there’s a need for careful tuning and ongoing calibration to see what works and fix what doesn’t. Organizations that aren’t reviewing false positive rates, refining detection thresholds, and keeping the signal sharp on a regular basis are basically inviting trouble and hoping for the best.

2. Over-reliance on AI can make people complacent

Something is appealing about AI doing everything for you and running continuously behind the scenes, scanning everything 24/7. Before long, employees become too comfortable with AI.

So, rather than treating it as just a tool, they start treating it as if it were a guarantee. They assume it has everything covered, and why should they double-check, after all, AI is monitoring everything, right?

As a result of this excessive dependence on AI, we lose our human component of cybersecurity. The team is no longer monitoring or interacting with alerts, manual verification of alerts falls by the wayside, and employees’ skills become rusty.

And when an advanced attack (one that AI wasn’t programmed to recognize) occurs, there will be no instinct or experience from a human being to identify the threat.

There is an aspect of cybersecurity that AI cannot replicate, and it comes from many years of experience in the field and the development of one’s sixth sense. You know when something does not feel right.

You know to zig when everything tells you to zag. This is not something that comes from training data or complex AI models. Consequently, if we rely on machines too heavily, we not only trust those machines but also fully eliminate our human safety net.

The fix? Don’t let AI be a replacement for good judgment. Treat it for what it really is: a super enthusiastic intern who’s fast with data, valuable, but not ready to perform tasks without supervision. Let AI help with tasks, but when it’s time for big decisions, you want experienced folks with real instincts to make the final calls.

3. AI can succumb to adversarial attacks, and its training data can be poisoned

Attackers can manipulate AI systems in ways that aren’t possible with traditional defense systems. One of the ways is to alter the data that is fed into the AI, often so subtly that it’s difficult to detect unless you know what to watch for.

By altering the training data in this manner, the goal of an adversary is to induce AI models to incorrectly classify threats. When this happens, malware will be classified as safe, and as a result, will be able to bypass firewall security.

This is not theoretical; recent research has shown that adversarial attacks can weaken AI-based detection systems’ capability to classify malware, detect network intrusion, and perform many other critical functions.

Another significant and concerning attack method on AI is through “data poisoning”. In this attack, the adversary alters, inserts, or deletes training data that the AI uses to learn from to effectively cause the AI to learn to fail, but in a manner such that it is almost undetectable.

The bottom line? The AI that’s supposed to protect you could become your enemy. And quite frankly, you might not even notice anything’s wrong until it’s too late.

4. The black box problem makes AI hard to trust and audit

Most AI models, especially those deep learning ones, just spit out threat scores and alerts with zero explanation. So, security analysts are left in the dark. They either take the system’s word for it or just stare at a verdict without knowing what triggered it.

That’s a big problem. Analysts need to move quickly. They need to justify every call they make to their teams, to executives, to auditors, and most often these days, to regulators.

If the AI won’t explain why it flagged something, how do you then tell the board or the compliance team why you missed a breach? It turns post-incident investigations into guesswork, and compliance reporting becomes like filling out a Mad Lib.

Regulators are paying attention to it, too. The EU AI Act literally requires high-risk AI systems to provide actual info about how they make decisions. The NIST AI Risk Management Framework? Same requirement. Explainability is not negotiable if you want people to trust your AI.

If you’re shopping for AI security vendors, treat explainability like a dealbreaker. Don’t just take their word for it; ask them to show their work. If all you get are vague defensive answers, that’s a big red flag.

5. AI in cyber attacks: attackers are using the same tools

The same AI tools defense teams rely on are also becoming a hacker’s best friend. Attackers are weaponizing AI against the systems it’s meant to defend, and they’re moving fast.

According to Sift’s latest Digital Trust Index, AI-driven scams increased by nearly 456 percent in just a year. Over 82 percent of phishing emails are running on AI now. The latest research shows a 1,265 percent spike in generative AI-powered phishing. The FBI has also warned that criminals are using AI voices and text to impersonate government officials in credential-stealing sprees.

Here’s where AI in cyber attacks actually finds its use case:

AI-powered malware

Attackers use AI to create super malware, think malicious code that constantly rewrites itself to dodge your antivirus. Never the same twice, so it’s hard for the good ol’ signature-based detection to catch it. This kind of living malware can adapt its behavior to counter whatever defenses the host system throws at it.

AI-generated phishing

With AI, scammers can craft highly personalized phishing emails. Not those “prince in Nigeria” emails of decades ago with badly constructed grammar and misspelled words. No.

Now you get spotless grammar and perfect context, all tailored to whatever embarrassing secrets they harvested from your socials. And they push these out by the thousands with the help of AI, each custom-built for the target. Spotting them is almost as hard as finding a needle in a haystack unless you’re really on your game.

Deepfakes and voice clones

A two-second clip of your voice from TikTok is all attackers need to make a hyper-realistic audio or video of real people. (Read our guide to explore whether you should use TikTok or not.)

Once, an incident went viral of a parent who received a phone call and heard a voice that sounded exactly like her daughter’s voice, sobbing about a fake kidnapping.

The scammers demanded $1 million as ransom. The police later discovered the supposed kidnappers used AI to clone the voice. The same tricks are hitting businesses, too.

AI-generated voices of executives authorize six-figure wire transfers. Even in business email compromise attacks, AI has become the go-to tool for scammers.

Automated vulnerability scanners

AI bots now rifle through your network traffic, logs, and code at inhuman speed, mapping out weak spots. Attackers don’t even need much hacking skills anymore to pull off attacks.

So, is AI a cybersecurity threat? In the wrong hands, yes. That’s one of the biggest challenges the security industry folds are facing today.

6. Data privacy and hidden ownership risks

AI needs data, like mountains of it, to actually function well. Especially in cybersecurity, we’re not just talking about cat photos or weather trends. No, this is the real stuff: network blueprints, defense logic, logs of user behavior, and all those proprietary security processes security teams usually rely on. And once all that data lands in a third-party AI platform? You might be surprised by where it actually ends up.

A lot of commercial AI platforms require users to grant these super-broad licensing rights over submitted data. Depending on the terms, they can use your data to train their own models, hand it off to other partners, or just incorporate it into their giant datasets, sometimes without asking for your consent.

Sometimes there’s a checkbox to agree or disagree, but honestly, who reads those?

This kind of thing could leak your data without a single hacker being responsible. No malware needed. Just a boring little line buried in a terms of service document you probably didn’t even read before using the service. For companies handling regulated data, think patient charts, bank records, government files, that’s not just a privacy concern, it’s a compliance liability.

Bottom line? If you’re about to upload any sensitive info into a third-party AI tool, make sure your legal team actually checks those terms. If you haven’t done it yet, it’s already overdue.

7. Bias and ethical problems in AI decision-making

AI doesn’t discriminate intentionally. But it can discriminate systematically, and the outcome is just as harmful. It just kind of absorbs whatever data the creators fed into it during training, and if that data’s flawed?

The results can get ugly, fast. The system starts flagging folks who fit certain profiles (behavior, demography) or who just happen to live in a particular geographic location, creating a security posture that’s uneven or unfair.

And the numbers don’t lie. According to a study from the University at Buffalo, deepfake detectors were way more likely (39.1 percent of the time) to call real faces of Black men ‘fake’ compared to less than 16 percent for white women.

That’s not some minor calibration issue. It’s a structural flaw, one with actual consequences, especially where security or surveillance is involved.

8. High implementation costs and the talent shortage

Implementing robust AI-based cybersecurity solutions is costly for businesses today. Companies can’t simply purchase a subscription to a great piece of software.

Instead, they’ll need specialized hardware such as GPUs for both model training and inference, and expensive enterprise-level software licenses. A team of data scientists, AI security architects and ongoing maintenance is also a must-have.

A company that is large enough to bear these costs can do so without issue, but small companies with much less capital, generally speaking, will not be able to afford the high price tag associated with deploying a new model.

This leaves the capability divide between those companies that are large enough to afford the implementation of AI cyber fortresses vs. all the other businesses.

In addition, if your business can somehow manage to afford the needed hardware and software licenses, you will still need to hire or train people with the appropriate level of knowledge to effectively use the tools available.

Seeking out skilled professionals in AI security development is an extremely difficult proposition. The demand for these resources is off the charts, and the availability of these resources is virtually non-existent!

What typically happens is that companies will find themselves without sufficient resources to manage their tools, maybe due to a lack of skill level to do so. And thus, employees rely on luck to prevent a major mistake, much like attempting to operate a high-end telephone when you’ve never held one before.

The end result is that a company may erroneously believe its environment is very secure, and in reality, they only have a fancy doorknob on an open door.

9. AI creates a dangerous false sense of security

AI in cybersecurity sometimes works a little too well at making everyone feel cozy, and that’s actually terrifying. Companies invest a huge amount of money in some flashy AI security platform, and they begin to act like these tools have everything under control. Manual audits? Skipped.

Basic security hygiene, patch updates, access reviews, and employee training, reminding them how to spot phishing links, are ignored because they think AI is handling it. Human reviews? Those get kicked aside like they don’t matter. You really start slipping on the boring basics because of the illusion that “the AI’s on guard.”

The reality is that AI should just be one piece of the whole defense puzzle, not the only piece. It shouldn’t be a replacement for the good ol’ firewalls, access control, employee training, or incident response planning. Rely on AI too much, and you’re basically leaving a huge security gap, the kind sophisticated attackers know how to find.

10. AI models need constant updates to stay relevant

AI is not a set-and-forget solution that’ll just run on autopilot forever. The threat landscape is ever-changing. Brand new hacking techniques and new malware are popping up every day, and attackers keep getting smarter.

AI systems running on outdated threat data are tantamount to suicide in today’s cybersecurity world. If you don’t keep retraining, refining, and updating your models, you’re basically leaving the back door wide open for attackers to come and go as they please.

And, for real, maintaining these models isn’t just a little side job. It eats up a ton of time and resources, and you always need continuous monitoring. You also need someone watching for when your model accuracy starts receding before it actually exposes you to real danger. Without these, even a top-notch AI system you spent months building gradually turns into a security risk rather than a defender.

11. Prompt injection and LLM-specific vulnerabilities

Several organizations have started to include large language models (LLMs) within their security processes. They use it to draft reports, assist in triaging alerts, and support compliance documentation. This is exciting, but there are also risks associated with using LLMs, specifically the introduction of new types of vulnerabilities, such as prompt injection.

According to OWASP’s recent top ten list for application security threats, prompt injection is ranked as the number one threat to LLM applications. Prompt injection attacks are often very subtle and can be engineered to manipulate the large language model through crafted input as intended and subsequently hijack or subvert the model’s intended function.

For example, if the LLM model is fed with a carefully constructed text string, it could cause the LLM model to leak confidential information or allow it to operate outside of its safety protocols.

There are two types of prompt injection: direct and indirect. In the case of a direct prompt injection, the input contains a command that instructs the LLM model to reveal restricted data.

Conversely, an indirect prompt injection hides within external content (i.e., within a resume, or random PDF document, or in a web page, etc.) that the model processes or acts on.

Prompt injection is just one of the many dangers AI presents in cybersecurity; model theft and data poisoning are also serious risks. A recent security audit found these three as the top dangers of AI in cybersecurity. It recommends the control of access based on employee roles, strict input validation, and schedule real security audits if you want any chance of mitigating these risks.

Model tampering is another growing concern for enterprises that fine-tune LLMs on their internal data. Maybe someone inside tweaks the training data, or some data pipeline gets hijacked, and the model starts misbehaving.

The worst part? No one might suspect until it blows over. So, sure, AI is good, but it’s not magic. Use it only as support for your human security team, not a substitute.

Examples of AI in cybersecurity

These days, both defense and offense teams use AI. Here are some examples of how these tools operate on both sides of the threat landscape.

Defensive AI applications:

- Behavioral analytics platforms that monitor login habits to flag unusual patterns. If someone randomly logs in from Tokyo at 3 AM but lives in Cleveland, red flags go up. Accessing files late at night, lateral movements across networks like you own the place? The system flags those.

- Email security with AI that analyzes sender behavior. Weird sender? Suspicious link? Odd turn of phrase? It blocks the email before you even have a chance to click “open.” No more “You’ve inherited a million dollars from Prince So-and-So” in your inbox. (Check our list of the top free secure email services for your use.)

- Then you’ve got EDR (endpoint detection and response) tools (like antivirus) using machine learning to help them catch fileless malware or those zero-day attacks that basic scanners would totally miss.

- Finally, SIEM systems powered by AI, sifting through a gazillion logs. They pick out the real threats from all the noise. I mean, who wants to manually comb through hundreds of logs just to find one real alert?

Offensive applications of AI (AI in cyber attacks):

- GenAI crafts scam emails that actually sound like your boss wrote them. The grammar is usually so polished that you might just click if you’re not careful.

- Hackers have password-cracking tools powered by AI. They don’t just randomly guess passwords; they analyze stolen data, play the odds, and identify patterns to bring out your likely password combo first.

- AI-driven voice cloning tools used in social engineering attacks can mimic the voice of a company executive to scam people. There have been cases where attackers fake “executive” phone calls authorizing wire transfers of huge amounts of money.

- And don’t forget AI-mutating malware that rewrites itself every time it lands somewhere new, old-school signature detection antiviruses find it hard to catch those.

Bottom line? The line separating these two sides of AI usage is actually thinner than most of us realize. The same AI models serving defensive purposes can also serve offensive purposes.

Is AI a threat to cybersecurity jobs?

This is one question that pops up all the time on LinkedIn, conference panels, AI and cybersecurity articles, you name it. And honestly, the answer is not that black and white.

AI isn’t eliminating cybersecurity jobs. If anything, it’s reshaping them. If your job revolves around repetitive tasks like slogging through endless log files or sifting through tier-1 alerts and basic vulnerability scanning, AI is automating all that.

Meaning, there’s no need for a human to do those. But actual security work? That’s not dying. The demand for human security experts is not dropping; there are even more jobs in security now.

The difference? Now you gotta know your way around AI itself. Companies don’t just want someone to clock vulnerabilities. They want someone who understands how AI works, who can spot when the system’s failing or being manipulated, sniff out AI-powered attacks, and build rules for how all these AI tools should actually be governed.

If you could skate by before with just the basics, better hit the books, because now you need to get more skills and expand your knowledge. At the end of the day, it’s all about adapting, or you’ll be left behind.

Security pros aren’t on the AI chopping block, but the job market wants folks with sharper, wider skills. These openings aren’t going anywhere; they’re just crankier and tougher than ever.

How to stay safe from AI risks

AI security risks aren’t just for businesses; It hits regular people too. Anyone using AI tools or apps should care. So don’t just go with the flow.

Both individuals and big companies need to be proactive in locking things down, checking what’s happening regularly, and not counting on wishful thinking. Hope’s great, but planning’s way better. Here’s what actually helps:

1. Audit your AI security tools regularly.

If you’re using any AI tool for security purposes, review them from time to time—do vulnerability checks, bias reviews, all of it. Don’t just wait until something goes wrong.

And don’t relax, believing your internal team knows everything about the audit, bring in outside folks if you have to. You want to find the dusty corners before someone with bad intentions does.

2. Limit what sensitive data goes into AI tools

People keep shoving sensitive info into AI tools like it’s “totally safe,” it’s not. We’ve seen actual stories of staff of big organizations dumping confidential business info into random chatbots without a second thought.

One healthcare provider submitted a patient’s personal information to an AI tool to draft a letter, potentially violating HIPAA in the process. A practical rule? Don’t put anything you won’t be comfortable seeing in a breach into a third-party AI tool.

3. Invest in AI data security

AI’s only as clever as the data you feed it, and that training data? You had better guard it like it’s your everything. Use encryption, get reliable backup systems, and keep a close watch on those data pipelines for anomalous activity.

Implement data versioning and source validation to catch poisoning attempts on time. Seriously, treat the networks feeding data into your AI the same way you’d treat any other critical infrastructure.

4. Update your AI tools

AI tools need the same strict maintenance culture as any other system. And that means regular updates. Don’t put it off. Install the patches, get those vendor updates, and don’t assume your sparkly new AI makes you immune to malware. Run your antivirus too.

5. Adversarial training helps harden AI models

Hitting your model with tough scenarios, like teaching them to recognize manipulation attempts, before you let it loose, makes them a tad bit stronger and prepares them for the threats they might encounter.

If you ask your vendor how they stress-test their AI and get vague answers, push harder. If they still fumble? Maybe find another vendor who actually knows what they’re doing.

6. Train your people, not only on your models

Your employees remain one of the major attack surfaces, and AI makes phishing attacks look scarily authentic now. Teach your team to recognize what AI-generated phishing attempts look like (they usually look too good; contextually believable and personalized).

Let them know how to verify unexpected requests via secondary channels and when to report something that feels off, even if they can’t just explain why. The old-school “double-check everything” approach is more useful than you think.

7. Make an incident response plan just for AI screwups

AI will mess up sometimes; it’s inevitable. Doesn’t matter if you did everything right. Have a plan ready for how to react to AI failure scenarios.

Clearly define each person’s role in a crisis, who’s in charge of containment, who investigates the failure, and remediation at each stage, before you need to act.

8. Involve humans in critical decision-making, not AI alone

AI is meant to augment human skills, not to displace it. Don’t let it run things solo, let humans be part of the review process. All high-stakes decisions, incident classification, access escalation, threat prioritization, etc, should involve human evaluation, not just algorithmic outputs. Have experienced folks reviewing the big calls, and let their insights loop back into improving the system.

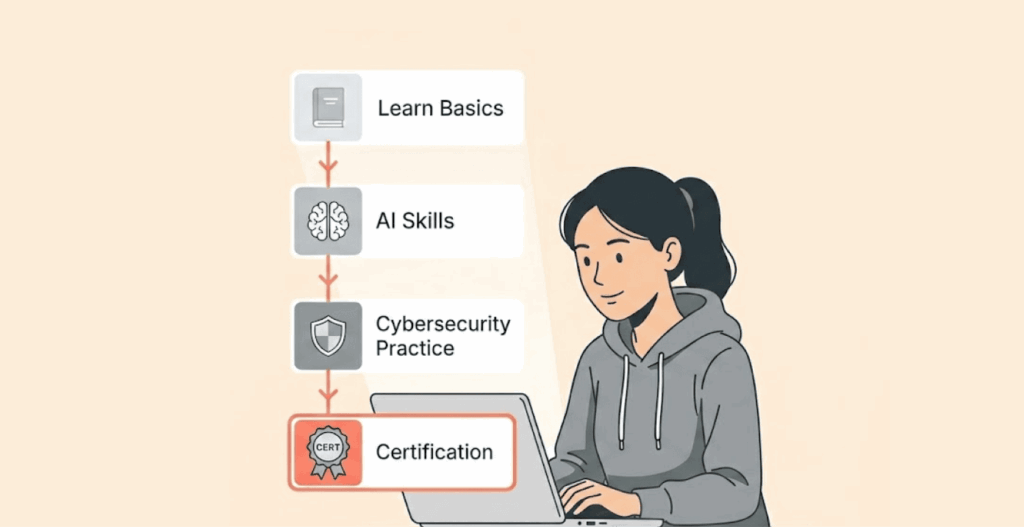

Steps to become a certified generative AI in cybersecurity professional

Want to become a Certified Generative AI in Cybersecurity expert, or are you looking to validate skills you’ve already built through experience? Good call. Getting a professional certificate is one of those things that can actually open doors these days, especially when everyone is acting like an “AI expert” on LinkedIn. Here’s how to approach it practically.

1. Validate your expertise with a recognized certification

Generative AI in cybersecurity professional certification programs, like the ones offered by firms like GSDC, basically put a stamp on your competencies.

Like saying “Hey, this person actually knows how to secure AI systems, detect AI-specific threats, and manage the risks that come with using generative AI in enterprise environments.

A lot of companies hiring right now are just figuring out how wild the AI-security world actually is, so a legit credential can set you apart from everyone else.

2. Learn from practitioners rather than books only

Do not just go for programs where you only memorize slides for exams. You need ones that include hands-on labs, expert-led sessions, and people showing you what the actual attacks look like in real-world scenarios, how to stop them, and how to securely deploy a model.

Why? Because when you’re on the job, the question isn’t “What’s in chapter 12?” It’s “Can you protect us at 3 a.m. when some threat alert pops up on the dashboard?” Learn from people actually fighting the fight, not just folks reading research papers.

3. Actively build your professional network

Networking and sharing knowledge will keep you up to speed with AI & cybersecurity, and collaborating with others in these fields is the primary way to do this.

Participating in virtual events and joining professional associations, and connecting with your peers who are actually working on these problems, will give you practical insights that no certification can replace. Most of what’ll save your job someday comes from real conversations, not from a textbook. Be where the conversations are.

FAQs

One major risk includes analyst complacency from over-reliance and trusting AI. Another risk is adversarial attacks, plus there’s also a possibility of someone messing up the training data (what they call data poisoning). There is a high volume of false positive alerts, and most AI systems lack explainability in their decision-making. Data privacy and ownership risks when using third-party AI solutions are another issue.

Yes, AI has made cyberattacks easy for attackers. Attackers can create personalized phishing emails that look so legit you’d hardly be able to tell it’s fake. Malware? AI can create polymorphic malware that rewrites its code to dodge detection. Then there’s AI voice and face cloning, where scammers pose as someone else (maybe your boss or mom) to deceive you into sending money or authorizing a transaction. Plus, credential stuffing and vulnerability scanning can now be done in minutes using AI. Reports have revealed that AI-powered phishing has increased significantly, fueled by widely accessible generative AI tools.

Well, not exactly. The routine, repetitive, high-volume tasks? AI can take over those tasks because companies are using AI to automate such tasks. But the demand for human expertise, in critical areas like AI oversight, threat hunting, advanced analysis, and governance, is not declining; it’s rising. Professionals who actually know how AI and security work have nothing to worry about. Companies actually want more humans who can manage and question the results AI churns out, and who can interpret its weirdness when things go sideways.

The key here is regular auditing of AI systems with penetration testing and bias reviews. Control what sensitive data you feed into AI platforms. Harden training data pipelines to prevent data poisoning. Importantly, train employees to spot AI-generated social engineering, use adversarial training to harden your models, have a tailored plan for dealing with AI failures, update software regularly, and have humans oversee AI outputs.

Most AI models spit out alerts but won’t give you the ‘why’ behind them. So when security people get an alert, they’ve got no clue what triggered it. That’s a problem when it comes to alert validations, or for forensic experts digging into incidents, or proving to regulators that you complied with rules. Now, with new laws like the EU AI Act and the NIST AI Risk Management framework requiring explainable outputs from AI systems, especially those used in high-risk domains like security.